What Arcee Is and Why It’s Making Noise

Arcee AI is a small but fast-moving open source AI company that has quietly built a reputation for punching well above its weight. Founded with a focus on small language models and model merging techniques, Arcee has released a series of compact, high-performing models that consistently benchmark near or above much larger competitors. The company is not backed by the same billions as OpenAI or Google, and that is precisely what makes it interesting.

What separates Arcee from the hyperscaler crowd is their philosophy: instead of scaling parameters to the moon, they invest in specialization, fine-tuning, and architectural efficiency. Their models are designed to be deployed in real environments — on-premise, in private clouds, and inside enterprise infrastructure — not just as API calls to a third-party server. For industries where data sovereignty and latency matter, that distinction is enormous.

Industry watchers are paying attention because Arcee’s results challenge a core assumption that has driven enterprise AI spending for years: that bigger models are always better models. For manufacturing leaders evaluating AI, Arcee’s approach is a direct signal that the playbook is changing.

The Case Against Big AI Models in Operations

There is a seductive logic to buying AI from a hyperscaler. The brand names are familiar, the sales decks are polished, and the benchmarks look impressive. But when quality managers and operations leaders actually try to deploy these massive systems inside a factory or a supply chain environment, the reality is rarely as clean as the pitch. Large models are expensive to run, slow to respond, and difficult to customize for the specific vocabulary and edge cases of your operation.

Latency is a practical problem that rarely gets enough attention in vendor conversations. A general-purpose large language model processing a quality inspection query through a cloud API introduces round-trip delays that can disrupt automated workflows. For a production line making decisions in near real-time, even a two-second delay compounds into meaningful throughput loss. The bigger the model, the more compute it consumes per inference — and those costs scale brutally when you are running thousands of queries per shift.

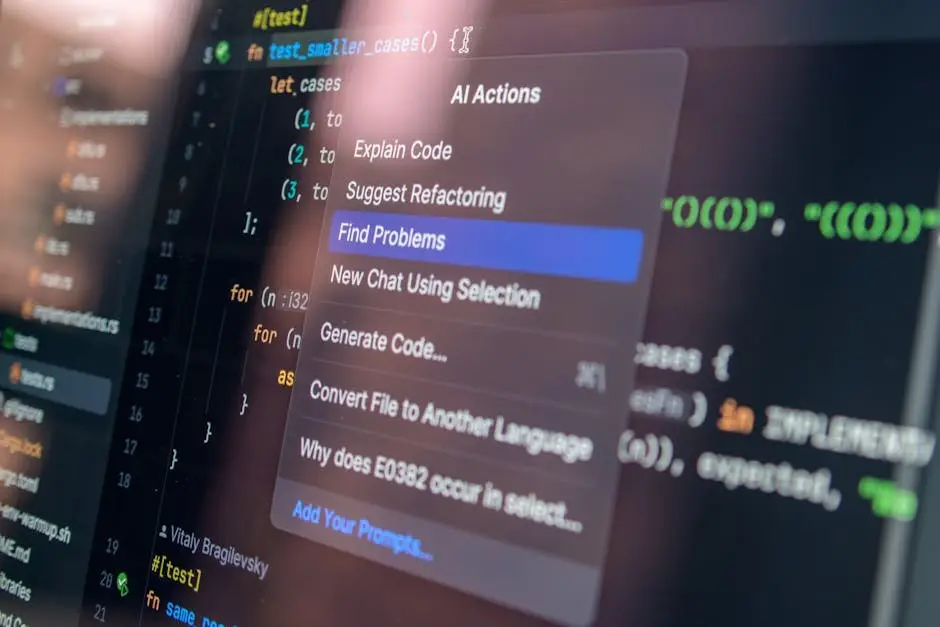

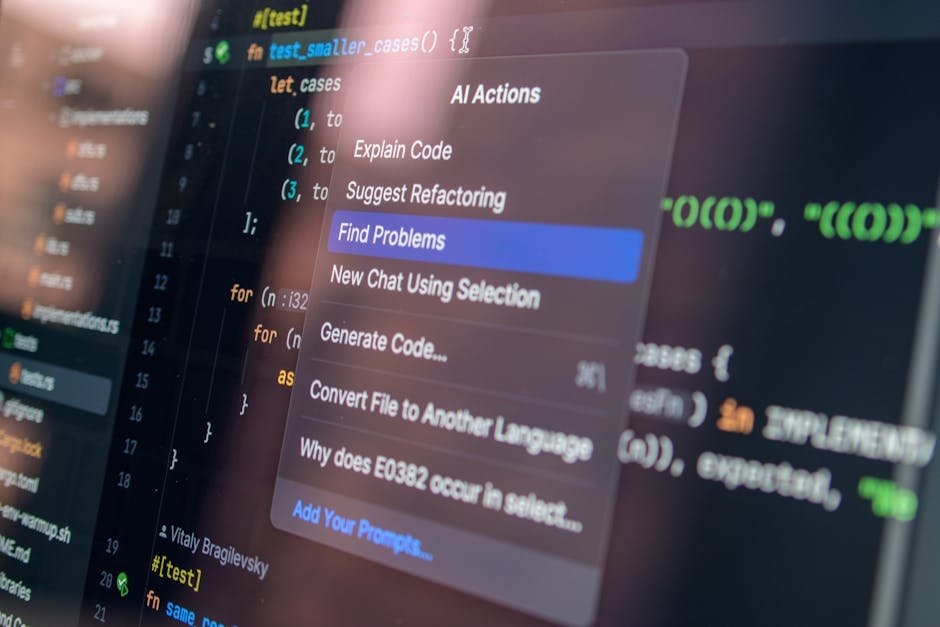

Vendor lock-in is the slow-burn risk. When your quality management system is tightly coupled to a hyperscaler’s proprietary AI deployment, switching costs grow every quarter. Your team learns their interface, your integrations are built around their API schemas, and your data flows into their infrastructure. Open source alternatives force a different conversation, one where you own the stack and retain the flexibility to adapt as your operations evolve.

What Smaller, Specialized Models Actually Deliver

The performance case for small language models in manufacturing and quality contexts is stronger than most executives realize. A well-fine-tuned compact model trained on domain-specific data (think inspection reports, non-conformance records, and supplier quality documentation) will often outperform a generic large model on your actual tasks. It knows your terminology, it understands the context of your quality codes, and it does not waste inference cycles processing knowledge that is irrelevant to your operation.

Cost efficiency is not a minor footnote here; it is a strategic advantage. Running a 7-billion or 13-billion parameter model on modest on-premise hardware can cost a fraction of what continuous cloud API usage costs at scale. Several manufacturers who have moved from hyperscaler APIs to locally hosted open source models report cost reductions of 60 to 80 percent on their AI inference spend. That freed-up budget does not disappear; it funds more automation, more data integration, and more value.

On-premise deployment also solves the data privacy problem that stalls many manufacturing AI projects before they start. Proprietary manufacturing processes, supplier pricing data, and product specifications are assets you cannot afford to leak into a third-party model’s training pipeline. When you run an open source AI model on your own infrastructure, your data stays inside your network. Compliance teams relax. Legal teams stop blocking projects. Deployment timelines shorten significantly.

How to Evaluate Any AI Model for Your Operations

The right evaluation framework saves you from buying a solution before you understand your problem. Start with fit-for-purpose testing: take a real dataset from your own operation — actual quality records, defect descriptions, process parameters — and run candidate models against it. Do not let vendors run the tests. Your internal benchmark, even if it is rough, will tell you more than any published leaderboard. An open source AI model that scores well on your data beats a famous model that scores well on someone else’s data.
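As a rough illustration of fit-for-purpose testing, the sketch below scores candidate models against the same set of labeled records and ranks them. Everything in it is hypothetical: the `accuracy` and `rank_models` helpers, the sample defect descriptions, the quality codes, and the two stand-in "models", which are plain functions so the example runs without any external service. In a real evaluation, each callable would wrap a call to a candidate model and the examples would come from your own quality records.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical labeled example: (defect description, expected quality code).
Example = Tuple[str, str]


def accuracy(model: Callable[[str], str], examples: List[Example]) -> float:
    """Fraction of examples where the model's answer matches the expected code."""
    if not examples:
        raise ValueError("need at least one example")
    correct = sum(
        1
        for text, expected in examples
        if model(text).strip().lower() == expected.strip().lower()
    )
    return correct / len(examples)


def rank_models(
    models: Dict[str, Callable[[str], str]], examples: List[Example]
) -> List[Tuple[str, float]]:
    """Score every candidate on the same data and return them best first."""
    scores = [(name, accuracy(fn, examples)) for name, fn in models.items()]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


# Stand-in "models" (assumptions for illustration only).
def keyword_model(text: str) -> str:
    return "SCRATCH" if "scratch" in text.lower() else "OTHER"


def constant_model(text: str) -> str:
    return "OTHER"


# Made-up records; replace with a sample of your own data.
EXAMPLES: List[Example] = [
    ("Surface scratch near weld seam", "SCRATCH"),
    ("Bore diameter out of tolerance", "OTHER"),
    ("Light scratches on housing", "SCRATCH"),
    ("Missing fastener at station 4", "OTHER"),
]

if __name__ == "__main__":
    candidates = {"keyword": keyword_model, "constant": constant_model}
    for name, score in rank_models(candidates, EXAMPLES):
        print(f"{name}: {score:.2f}")
```

Exact-match accuracy is only one possible metric. For free-text outputs you would likely need a task-specific comparison, but the principle of running every candidate on the same internal data stays the same.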

Total cost of ownership is the number that most AI evaluations get wrong. The license or API cost is the visible part; the hidden costs are integration engineering, ongoing fine-tuning, infrastructure, and the internal time your team spends maintaining the connection between the AI system and your production systems. Build a 24-month TCO model before you commit to any platform. For enterprise AI deployment, the difference between a well-scoped open source deployment and a hyperscaler subscription can easily be seven figures over two years.
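A 24-month TCO model can start as a few lines of arithmetic. The sketch below is one possible shape, not a definitive method: the `CostProfile` fields, the helper functions, and every figure in the example are hypothetical placeholders chosen only to show the calculation. Substitute your own quotes and internal cost estimates.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CostProfile:
    """Cost inputs for one deployment option (all values are placeholders)."""
    upfront: float              # hardware, initial integration engineering
    monthly_fixed: float        # hosting, subscriptions, maintenance time
    cost_per_1k_queries: float  # API fee or marginal compute per 1,000 inferences


def total_cost(profile: CostProfile, queries_per_month: int, months: int = 24) -> float:
    """Upfront cost plus fixed and usage-based costs over the period."""
    usage = profile.cost_per_1k_queries * (queries_per_month / 1000)
    return profile.upfront + (profile.monthly_fixed + usage) * months


def break_even_month(
    a: CostProfile, b: CostProfile, queries_per_month: int, horizon: int = 24
) -> Optional[int]:
    """First month in which option `a` is cumulatively no more expensive than `b`."""
    for month in range(1, horizon + 1):
        if total_cost(a, queries_per_month, month) <= total_cost(b, queries_per_month, month):
            return month
    return None


# Hypothetical inputs, for illustration only.
self_hosted = CostProfile(upfront=117_000, monthly_fixed=6_000, cost_per_1k_queries=0.0)
hosted_api = CostProfile(upfront=20_000, monthly_fixed=1_000, cost_per_1k_queries=20.0)
QUERIES_PER_MONTH = 500_000

if __name__ == "__main__":
    print("self-hosted, 24 months:", total_cost(self_hosted, QUERIES_PER_MONTH))
    print("hosted API, 24 months: ", total_cost(hosted_api, QUERIES_PER_MONTH))
    print("break-even month:", break_even_month(self_hosted, hosted_api, QUERIES_PER_MONTH))
```

The result is highly sensitive to query volume, so it is worth rerunning the comparison at several plausible volumes rather than relying on a single estimate.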

Use this practical checklist when assessing any AI model for your operations:

- Fit-for-purpose: Does it perform well on your actual operational data, not just public benchmarks?

- Data privacy: Can it run fully on-premise or in your private cloud with no external data transfer?

- Integration readiness: Does it expose standard APIs that connect to your MES, ERP, or SCADA systems?

- Fine-tuning flexibility: Can your team adapt the model as your processes and data evolve?

- Total cost of ownership: Have you modeled inference costs, infrastructure, and engineering time at scale?

- Vendor dependency: What happens to your operation if the provider changes pricing or discontinues the model?
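One way to make the checklist comparable across candidates is a simple weighted scorecard. The sketch below is an assumption-laden illustration: the criterion keys mirror the checklist, but the weights, the 1-to-5 rating scale, and the `weighted_score` helper are hypothetical choices that you would tune to your own priorities.

```python
from typing import Dict

# Criteria mirror the checklist; the weights are hypothetical and sum to 1.0.
WEIGHTS: Dict[str, float] = {
    "fit_for_purpose": 0.30,
    "data_privacy": 0.20,
    "integration_readiness": 0.20,
    "fine_tuning_flexibility": 0.10,
    "total_cost_of_ownership": 0.10,
    "vendor_dependency": 0.10,
}


def weighted_score(ratings: Dict[str, int]) -> float:
    """Combine 1-5 ratings per criterion into a single weighted score."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    for name in WEIGHTS:
        if not 1 <= ratings[name] <= 5:
            raise ValueError(f"{name} must be rated 1-5, got {ratings[name]}")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


# Made-up ratings for a single candidate model.
candidate = {
    "fit_for_purpose": 4,
    "data_privacy": 5,
    "integration_readiness": 3,
    "fine_tuning_flexibility": 4,
    "total_cost_of_ownership": 4,
    "vendor_dependency": 5,
}

if __name__ == "__main__":
    print(f"weighted score: {weighted_score(candidate):.2f}")
```

A single number should not replace judgment. Its main use is to force an explicit rating on every criterion so that no item on the checklist gets skipped.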

Integration readiness deserves particular emphasis for manufacturing environments. Your AI model does not live in isolation — it needs to read from sensors, write to quality management systems, and trigger downstream actions. An open source AI model with clean, documented APIs and active community support will integrate faster and more reliably than a closed system where every custom connection requires vendor involvement and a change order.

Ready to find AI opportunities in your business?

Book a Free AI Opportunity Audit — a 30-minute call where we map the highest-value automations in your operation.

Conclusion

The rise of Arcee and the broader open source AI movement delivers a straightforward message for manufacturing and quality leaders: the best AI model is not the most famous one, and it is not the largest one. It is the one that solves your specific operational problem reliably, fits inside your data governance requirements, and delivers a return you can actually measure. Big Tech AI is not wrong for every use case, but it is wrong for far more manufacturing use cases than the sales narrative suggests.

Arcee’s results suggest that a focused, well-engineered small language model can outperform a bloated general-purpose system on the tasks that actually matter to your operation. The manufacturers who recognize this early will deploy faster, spend less, and build AI capabilities they genuinely own. The ones who wait for the perfect hyperscaler solution will spend another two years in pilot purgatory while their competitors automate past them.

Start with clarity about your problem, apply the evaluation criteria above, and resist the pull of brand recognition as a proxy for fit. The right open source AI model for your operation exists today — the only question is whether you have a structured process for finding it. That is exactly what our Free AI Opportunity Audit is built to deliver.