Why Manufacturing Is Repeating Software’s Biggest AI Mistake

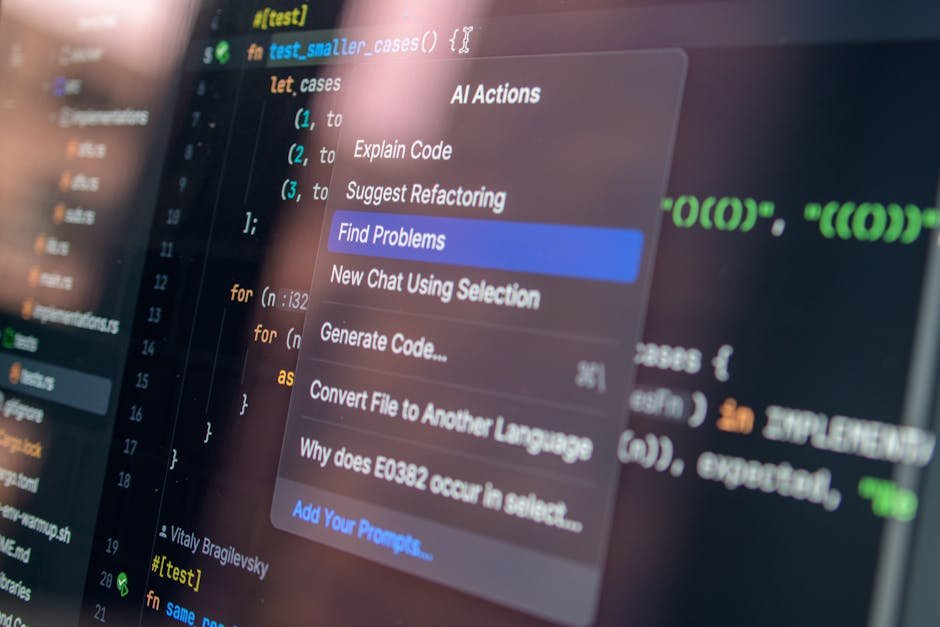

Software teams spent three years learning a brutal lesson: deploying AI tools without central oversight creates fragmentation, rework, and zero measurable ROI. Individual developers adopted GitHub Copilot, Tabnine, and a dozen other tools independently. Teams ran parallel workflows. No one standardized prompts, measured outcomes, or connected tooling to business results. The productivity gains were real but invisible at the org level — because no management layer existed to capture and compound them. Manufacturing operations are walking into the exact same trap right now.

The parallel is not superficial. Quality managers are running ChatGPT pilots in one department while operations runs a separate computer vision experiment on a different line. ERP and MES systems sit untouched by either effort. Nobody owns the integration question, the data standards question, or the ROI measurement question. The result is a collection of isolated experiments that individually look promising and collectively deliver nothing. AI started paying off in software development when organizations stopped treating it as a tool and started treating it as an infrastructure problem. Manufacturing needs to make the same shift.

This article makes one argument clearly: the productivity gains AI delivers in software development are being left on the table in manufacturing, not because the technology does not translate, but because most plants lack the centralized AI management layer that makes any of it compound. The sections that follow show you what that layer looks like, how to build it, and what it costs you to wait.

What AI’s Success in Software Development Actually Looks Like — By the Numbers

Documented productivity lifts: code review, QA, documentation automation

The numbers from software are not speculative. GitHub’s controlled study of Copilot found that developers using the assistant completed a benchmark coding task 55% faster. McKinsey research documented a 20–45% reduction in time spent on code generation and documentation across enterprise software teams. Separate data from Google’s internal AI tooling program showed QA cycle times dropping by over 30% when AI was embedded directly into the testing pipeline rather than added as an afterthought.

These gains have direct manufacturing analogues. Code review maps to first-article inspection review and deviation documentation. QA automation maps to inline defect classification and root cause tagging. Documentation automation maps to work instruction generation, audit trail creation, and corrective action reporting. The tasks are different. The underlying pattern — AI handling structured, repetitive cognitive work while humans focus on judgment calls — is identical.

The critical detail buried in every software productivity study is that the highest gains came from teams where AI was woven into the existing workflow, not running alongside it. Developers who had to switch contexts to use an AI tool saw modest gains. Developers whose IDE surfaced AI suggestions inline saw transformational ones. The same principle applies to shop floor operations.

The management layer that makes these gains repeatable and scalable

No high-performing software engineering organization got its AI results by letting each developer choose their own tools without coordination. The teams that hit the top-quartile productivity numbers (companies like Shopify, Stripe, and Thoughtworks) built internal AI councils, standardized their toolchains, and measured outcomes against defined engineering KPIs. They treated AI adoption as an operational capability, not a personal productivity hack.

In manufacturing terms, this management layer is the equivalent of a centralized quality management system applied to AI tooling itself. It defines which tools get deployed, how they connect to existing systems, what success looks like numerically, and who is accountable when results fall short. Without this layer, every department runs its own experiment, no one shares learnings, and the organization as a whole pays for the same mistakes four times across four different lines.

Why quality outcomes improve when AI is embedded in process — not bolted on

Software teams that bolted AI onto existing workflows as a supplemental step saw marginal gains. Teams that redesigned workflows around AI capabilities saw compounding ones. The distinction matters because bolt-on AI requires humans to remember to use it, manually transfer outputs between systems, and reconcile AI-generated results with existing records. Each handoff is a failure point.

Embedded AI eliminates the handoff. In manufacturing, this means AI-driven inspection results flowing directly into the MES, not into a spreadsheet that someone manually uploads at the end of the shift. It means AI-generated corrective action drafts appearing inside the quality management system, not in a separate SaaS tool that requires copy-paste to close the loop. Process-embedded AI is a governance decision before it is a technology decision — and most plants have not made that governance decision yet.

Central AI Project Management: The Missing Infrastructure in Most Plants

What centralized AI governance looks like in practice

Centralized AI governance is not a committee that approves every prompt. It is a defined function — owned by a specific person or small team — responsible for four things: tool selection standards, integration requirements, outcome measurement, and cross-department knowledge sharing. In a 500-person plant, this can be a single operations technology lead with a quarterly steering group. In a multi-site enterprise, it scales to a formal Center of Excellence. The structure scales; the function does not change.

Practically, this means the plant has a maintained inventory of every AI tool in use, the data each tool touches, the systems it connects to, and the KPI it is supposed to move. It means new AI tool requests go through a lightweight evaluation against that inventory before deployment, so you do not end up with three different AI tools doing the same thing on different lines without talking to each other. It means someone is accountable for the aggregate ROI number, not just the pilot result.
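As a rough illustration of that lightweight evaluation, the sketch below checks a new tool request against the inventory before deployment. The `AITool` fields, the tool names, and the exact-match rule on process name are all assumptions made for the example, not a prescribed schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AITool:
    name: str
    department: str
    process: str           # process the tool covers, e.g. "visual inspection"
    connected_system: str  # system it integrates with, "" if none
    kpi: str               # KPI it is supposed to move, "" if none defined


def find_overlaps(inventory: list[AITool], request: AITool) -> list[AITool]:
    """Return deployed tools that already cover the requested process."""
    wanted = request.process.strip().lower()
    return [tool for tool in inventory if tool.process.strip().lower() == wanted]


# Hypothetical inventory and request, for illustration only.
inventory = [
    AITool("VisionCheck", "Quality", "visual inspection", "MES", "defect escape rate"),
    AITool("DraftBot", "Engineering", "work instruction drafting", "", ""),
]
request = AITool("LineCam AI", "Operations", "Visual Inspection", "", "")

overlaps = find_overlaps(inventory, request)
for tool in overlaps:
    print(f"{request.name} overlaps with {tool.name} ({tool.department})")
```

A real inventory would likely need fuzzier matching on process names, but even an exact-match check surfaces the most obvious duplicates before money is spent.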

Companies like Siemens and Bosch have published elements of their internal AI governance frameworks, and the consistent theme is that governance starts as a coordination function, not a control function. The goal is to accelerate adoption by removing duplication and integration friction — not to slow it down with approval bureaucracy.

How to assign ownership without creating bureaucratic bottlenecks

The most common objection to centralized AI project management in manufacturing is that it will slow things down. The opposite is true when ownership is assigned correctly. Bureaucratic bottlenecks come from unclear decision rights, not from clear ones. If the AI governance function owns standards and integration requirements but line managers retain authority to deploy tools that meet those standards without additional approval, speed increases because the integration questions are already answered.

Assign ownership to someone who already sits at the intersection of operations and technology — typically an operations technology manager, a senior quality engineer with systems exposure, or a lean/continuous improvement lead who has been pulled toward digital initiatives. This person does not need a data science background. They need process discipline, systems thinking, and organizational credibility. The technical complexity of most manufacturing AI deployments is lower than assumed; the organizational complexity is where most programs fail.

Fragmented Pilots vs. Governed AI Programs: Where Manufacturing Ops Actually Win

The compounding advantage of a unified AI workflow strategy

A governed AI program generates compounding returns because every deployment builds on shared infrastructure. Integration work done for one AI tool reduces the integration cost of the next. Data standards established for one use case cover adjacent use cases without rework. Measurement frameworks built for one line apply across the plant with minor adjustments. Fragmented pilots generate none of this compounding — each pilot is a one-time investment that produces a one-time result and then stops.

| Dimension | Fragmented Pilot Approach | Centrally Governed AI Program |
|---|---|---|
| Tool selection | Department-level, ad hoc | Evaluated against plant-wide standards |
| Integration with MES/ERP | Manual workarounds or none | Defined integration requirements before deployment |
| ROI measurement | Anecdotal or not tracked | KPI-linked, tracked by central function |
| Knowledge sharing | Siloed within department | Structured cross-department sharing cadence |
| Time to second deployment | Same effort as first, no reuse | Reduced by 40–60% due to shared infrastructure |
| Quality escape rate | Unchanged or marginally improved | Measurably reduced through consistent AI workflow standards |
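To see why reuse matters, here is a back-of-the-envelope sketch of cumulative integration effort. It assumes, purely for illustration, that every deployment in a fragmented setup costs the same as the first, and that the 40–60% reduction from the table applies equally to every governed deployment after the first. The ten-week figure and the function name are hypothetical.

```python
def total_effort(n_deployments: int, first_effort: float,
                 reuse_reduction: float = 0.0) -> float:
    """Cumulative effort when each deployment after the first costs
    (1 - reuse_reduction) times the effort of the first one."""
    if n_deployments <= 0:
        return 0.0
    if not 0.0 <= reuse_reduction <= 1.0:
        raise ValueError("reuse_reduction must be between 0 and 1")
    later_effort = first_effort * (1.0 - reuse_reduction)
    return first_effort + (n_deployments - 1) * later_effort


# Hypothetical plant: four deployments, ten weeks of work for the first.
fragmented = total_effort(4, 10.0)                          # no reuse at all
governed_low = total_effort(4, 10.0, reuse_reduction=0.4)   # 40% saved on later ones
governed_high = total_effort(4, 10.0, reuse_reduction=0.6)  # 60% saved on later ones

print(f"fragmented: {fragmented:.0f} weeks")
print(f"governed:   {governed_high:.0f} to {governed_low:.0f} weeks")
```

Under those assumptions, four deployments take 40 weeks when fragmented (4 × 10) and roughly 22 to 28 weeks when governed (10 + 3 × 4 and 10 + 3 × 6). The gap grows with every additional deployment.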

Why governed programs consistently outperform department-level experiments

Department-level AI experiments are not worthless — they generate proof of concept and internal credibility. The mistake is treating the pilot as the destination. Every high-performing AI software development team in the technology sector used early pilots to build the business case for a governed program, then moved fast to establish the infrastructure that made pilots repeatable. Manufacturing teams that stay in perpetual pilot mode are not being cautious — they are paying the ongoing cost of fragmentation without collecting the return.

Governed programs also produce better quality outcomes because they enforce consistency. When AI-assisted inspection runs on standardized models with standardized thresholds connected to a shared data pipeline, the results are auditable and improvable. When each line runs its own AI inspection tool with its own local configuration maintained by whoever set it up, you have four different definitions of a defect and no ability to benchmark across lines. Consistency is a quality input. Governance is how you get consistency.
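One way to make that consistency checkable is to compare each line’s inspection configuration against a plant standard. The sketch below does that; the configuration keys, model versions, and threshold values are invented for illustration.

```python
# Assumed plant-wide standard for AI-assisted inspection.
PLANT_STANDARD = {"model_version": "v3", "defect_threshold": 0.80}

# Hypothetical per-line configurations as they might be found in an audit.
line_configs = {
    "line_1": {"model_version": "v3", "defect_threshold": 0.80},
    "line_2": {"model_version": "v3", "defect_threshold": 0.65},
    "line_3": {"model_version": "v2", "defect_threshold": 0.80},
    "line_4": {"model_version": "v3", "defect_threshold": 0.80},
}


def find_drift(standard: dict, configs: dict) -> dict:
    """Map each drifting line to {setting: (actual, expected)}."""
    drift = {}
    for line, config in configs.items():
        diffs = {
            key: (config.get(key), expected)
            for key, expected in standard.items()
            if config.get(key) != expected
        }
        if diffs:
            drift[line] = diffs
    return drift


for line, diffs in find_drift(PLANT_STANDARD, line_configs).items():
    for key, (actual, expected) in diffs.items():
        print(f"{line}: {key} is {actual!r}, standard is {expected!r}")
```

Here two of the four lines would be flagged: one for a looser threshold, one for an older model. Until they are aligned, their defect counts are not comparable with the other lines.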

How to Build a Central AI Management Layer in Your Manufacturing Operation

Step 1: Audit current AI tool usage and identify overlap and gaps

Start by mapping what is already deployed — formally and informally. You will find more than you expect. Line engineers running ChatGPT for work instruction drafting. Quality teams using AI-assisted SPC tools they procured independently. Maintenance staff using vendor-supplied AI diagnostics that nobody in IT knows about. Document every tool, the process it touches, the data it uses, and whether it connects to any central system. This audit typically takes two to three weeks and immediately reveals where you are paying for duplicate capability and where critical processes have no AI support at all.

The audit output should be a single-page inventory with five columns: tool name, department, process covered, system integration status, and measured outcome (if any). Most plants completing this exercise for the first time find that fewer than 20% of deployed AI tools have any connection to MES or ERP data, and fewer than 30% have a defined KPI tied to their use. That gap is your starting point, not a reason to pause — it is the map of where centralized AI project management will pay back fastest.
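If the inventory is kept as a simple CSV, the two gap figures can be computed in a few lines. This is a minimal sketch: the column names mirror the five columns above, but their exact spelling, the `none` marker for unintegrated tools, and all the sample rows are assumptions.

```python
import csv
import io

# Hypothetical audit output with the five columns described above.
INVENTORY_CSV = """tool_name,department,process_covered,integration_status,measured_outcome
ChatGPT,Engineering,work instruction drafting,none,
SPC Assistant,Quality,statistical process control,none,first-pass yield
Vendor Diagnostics,Maintenance,equipment diagnostics,none,
VisionCheck,Quality,visual inspection,MES,defect escape rate
"""


def audit_summary(csv_text: str) -> dict:
    """Count tools and compute the share that is integrated or KPI-linked."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    total = len(rows)
    if total == 0:
        return {"tools": 0, "integrated_share": 0.0, "kpi_share": 0.0}
    integrated = sum(
        1 for row in rows if row["integration_status"].strip().lower() != "none"
    )
    with_kpi = sum(1 for row in rows if row["measured_outcome"].strip())
    return {
        "tools": total,
        "integrated_share": integrated / total,
        "kpi_share": with_kpi / total,
    }


summary = audit_summary(INVENTORY_CSV)
print(f"{summary['tools']} tools, "
      f"{summary['integrated_share']:.0%} integrated, "
      f"{summary['kpi_share']:.0%} with a KPI")
```

Rerunning the same summary each quarter gives the governance function a simple trend line for both gaps.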

Step 2: Define a governance charter with clear ownership and KPIs

A governance charter does not need to be a lengthy document. It needs to answer five questions: Who owns AI tool standards? What is the evaluation process for new tools? What integration requirements must every AI deployment meet? How will outcomes be measured and by whom? How often will the governance function review and report results? A one-page charter answering these questions, signed off by operations and quality leadership, is enough to start. Complexity can be added as the program matures.

KPIs should be operational, not technical. Avoid vanity metrics like number of AI tools deployed or number of employees trained. Measure defect escape rate, first-pass yield, time spent on manual quality documentation, and unplanned downtime attributable to process failures that AI-assisted monitoring should catch. These are the metrics your executive team already cares about. Tying AI governance directly to them removes the abstraction that makes AI programs feel optional.
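Two of those KPIs reduce to simple ratios. Definitions vary from plant to plant, so treat the sketch below as one common convention rather than the standard: it assumes first-pass yield is units that pass without rework divided by units started, and defect escape rate is defects found by customers divided by all defects found. The function names and sample counts are hypothetical.

```python
def first_pass_yield(units_started: int, units_passed_first_time: int) -> float:
    """Share of units that passed inspection without rework or repair."""
    if units_started <= 0:
        raise ValueError("units_started must be positive")
    return units_passed_first_time / units_started


def defect_escape_rate(found_internally: int, found_by_customer: int) -> float:
    """Share of all known defects that were caught only after shipment."""
    total_defects = found_internally + found_by_customer
    if total_defects == 0:
        return 0.0  # no defects recorded, so nothing escaped
    return found_by_customer / total_defects


# Hypothetical monthly figures for one line.
fpy = first_pass_yield(units_started=1000, units_passed_first_time=940)
escape = defect_escape_rate(found_internally=45, found_by_customer=5)

print(f"first-pass yield: {fpy:.1%}")
print(f"defect escape rate: {escape:.1%}")
```

Whatever definitions the charter adopts, the point is to fix them once so that the before and after numbers for every AI deployment are measured the same way.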

Step 3: Standardize integration points between AI tools and existing MES or ERP systems

Integration standards are the highest-leverage output of the governance function. Define three things: what data formats AI tools must accept and produce, which systems are authoritative for which data types, and what the minimum viable integration looks like for a tool to be considered production-ready rather than a pilot. These standards do not need to be technically complex — they need to be consistently enforced. A tool that produces results in a proprietary format that requires manual extraction is not integrated, regardless of what the vendor claims.
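A standard like this can be written down as a short checklist that every tool is run against. The sketch below is one hedged way to do it; the approved formats, the `ToolProfile` fields, and the tool names are assumptions for illustration, not a recommended standard.

```python
from dataclasses import dataclass

# Assumed plant standard: formats every AI tool must be able to produce.
APPROVED_OUTPUT_FORMATS = {"json", "csv"}


@dataclass(frozen=True)
class ToolProfile:
    name: str
    output_format: str                # e.g. "json", "csv", "proprietary"
    writes_to_system_of_record: bool  # results reach MES/ERP without human help
    needs_manual_extraction: bool     # someone has to export or copy results


def integration_gaps(tool: ToolProfile) -> list[str]:
    """List every way the tool falls short of the integration standard."""
    gaps = []
    if tool.output_format.lower() not in APPROVED_OUTPUT_FORMATS:
        gaps.append("output format is not an approved standard")
    if not tool.writes_to_system_of_record:
        gaps.append("does not write to the system of record")
    if tool.needs_manual_extraction:
        gaps.append("requires manual extraction of results")
    return gaps


def status(tool: ToolProfile) -> str:
    """A tool with no gaps is production-ready; anything else stays a pilot."""
    return "pilot" if integration_gaps(tool) else "production-ready"


# Hypothetical tools.
vendor_tool = ToolProfile("VendorVision", "proprietary", False, True)
integrated_tool = ToolProfile("VisionCheck", "json", True, False)

for tool in (vendor_tool, integrated_tool):
    print(f"{tool.name}: {status(tool)} {integration_gaps(tool)}")
```

Returning the list of gaps, rather than a bare pass or fail, gives the requesting department a concrete to-do list for moving a pilot into production.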

For most mid-size manufacturers running SAP, Oracle, or a major MES platform, the practical standard is API-based bidirectional data exchange with the core system of record for the process the AI tool touches. Inspection AI should write results to the quality module. Predictive maintenance AI should write alerts to the maintenance management system. Anything less creates a shadow data layer that grows into a governance problem within 18 months. Set the standard before you have ten tools deployed, not after.
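The routing rule in that paragraph can be captured as a small lookup that every AI tool’s output passes through. The sketch below only builds the record and makes no network call; the destination names, the record fields, and the tool names are hypothetical, and a real deployment would hand the record to whatever API the ERP or MES vendor actually provides.

```python
import json
from datetime import datetime, timezone

# Assumed mapping from AI tool type to the authoritative system for its data.
SYSTEM_OF_RECORD = {
    "inspection": "quality_module",
    "predictive_maintenance": "maintenance_management",
}


def build_record(tool_type: str, source_tool: str, payload: dict) -> dict:
    """Wrap an AI result with its destination so nothing lands in a shadow store."""
    try:
        destination = SYSTEM_OF_RECORD[tool_type]
    except KeyError:
        raise ValueError(f"no system of record defined for {tool_type!r}") from None
    return {
        "destination": destination,
        "source_tool": source_tool,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
        "payload": payload,
    }


# Hypothetical inspection result.
record = build_record(
    "inspection",
    "VisionCheck",
    {"part_id": "A-1042", "result": "fail", "defect_class": "scratch"},
)
print(json.dumps(record, indent=2))
```

Rejecting tool types that have no defined system of record is deliberate: it forces the ownership question to be answered before the tool starts producing data.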

Two Assumptions About AI Management That Are Costing Operations Teams Time

Misconception: Central AI governance requires a dedicated data science team

This assumption stops more AI programs than any technical barrier. Central AI governance in a manufacturing context is an operational discipline, not a data science function. The tools being governed are increasingly no-code or low-code. The integration work is configuration and API management, not model development. The measurement work is KPI tracking and process auditing — skills that already exist in quality and operations teams. You do not need a machine learning engineer to own AI workflow governance. You need someone with operational rigor and systems awareness.

The data science team misconception also inflates the perceived cost of governance. A lean governance function for a single plant can operate with 10–15% of one senior operational role’s time, supported by a quarterly cross-functional review. This is not a headcount investment — it is a reallocation of existing management attention toward a higher-return activity. The alternative is continuing to pay the coordination tax of unmanaged AI adoption, which typically shows up as duplicated vendor contracts, failed integrations, and pilots that never scale.

Misconception: Standardizing AI tools means slowing down innovation on the floor

Standardization and innovation are not opposites. Standardizing the integration layer and data requirements leaves every department free to evaluate and propose new AI tools within a clear framework. The difference is that when a line engineer wants to deploy a new AI-assisted scheduling tool, the integration path is already defined and the evaluation criteria are already set. The deployment decision takes days, not months. Without standards, every new tool requires a custom integration negotiation, an IT security review from scratch, and a data format conversion project — all of which genuinely slow innovation.

The software industry learned this during the platform era. Companies that standardized their development infrastructure — cloud providers, API standards, deployment pipelines — shipped faster than companies that let every team choose their own stack. Manufacturing AI is at the same inflection point. Governed AI workflow automation accelerates deployment velocity; it does not constrain it. The constraint is the absence of standards, not their presence.

The Operations Leaders Who Move First on This Will Be Hardest to Catch

The next 12 months: where AI workflow maturity separates top-quartile plants from the rest

The manufacturing organizations building centralized AI management infrastructure now are not just improving their current operations; they are creating a structural advantage that compounds quarterly. Every governed AI deployment they complete reduces the cost and time of the next one. Every integration standard they establish covers more use cases as new tools emerge. Every KPI framework they build generates historical data that trains better models and supports better decisions. This is the same compounding dynamic that made AI adoption self-reinforcing in software teams at technology firms, and it is available to manufacturing operations that make the same structural investment.

The gap between AI-mature plants and the rest is not yet unbridgeable, but it is widening faster than most operations leaders expect. Analyst projections from Gartner and McKinsey both point to 2025–2026 as the period when AI-driven quality and throughput advantages become visible in publicly reported operational metrics. Plants that are still running fragmented pilots in 18 months will be trying to close a gap against competitors who have two years of governed program data, trained workflows, and integrated infrastructure. That is not a technology problem. That is a strategic one.

The decision in front of quality managers and operations leaders right now is not whether to adopt AI; that decision has already been made by market pressure. The decision is whether to adopt it in a way that compounds or in a way that fragments. Central AI governance is what makes software’s AI gains transferable to the manufacturing floor. Build the management layer first, and every tool you deploy after that works harder than it would have otherwise.